In the first case were the drive is broken we will have to contact the DC to replace the drive. Once the drive is removed from the RAID array, we need to proceed with the actual process of physical disk replacement based on the scenarios mentioned earlier. Now you can remove the second drive (sdb) from the RAID array using the below commands. Step 3: Remove the failed drive from the RAID array. In some cases only one or two partition will be shown as failed, in these case the other partition should also be declared as failed using the above command for that particular drive. Something we can relate to the scenario in the first case. Now the RAID status will be as shown below. Each partition should be done separately. The following commands are used in declaring the drives as failed. Now the second drive or sdb should be declared as failed to start the process. The following command shows the drives that are part of an array. Since the drives are not broken in the second case, to remove the drive from the RAID array we will have to manually declare the drive as failed in the RAID status. I hope you should be able to distinguish between a degraded RAID and a healthy one by comparing the output of /proc/mdstat given in the two instances. The expected output for a healthy raid status is represented below with a file picture. Since there is no drive failure, the RAID state would be in an intact or healthy status. The second scenario demands removal of a healthy drive from a RAID array and its conversion for backup storage. This is perfectly in synchronization with the expected output of scenario one where a HDD of software raid got failed and we need to replace it with a healthy one.Īs mentioned in the top portion of the blog. The absence of second letter indicates an issue with drives. Here as you can see, all the three raid devices shows its state as “”. The output obtained for the server in this case was as given belowĪ missing or defective drive is shown by and/or.

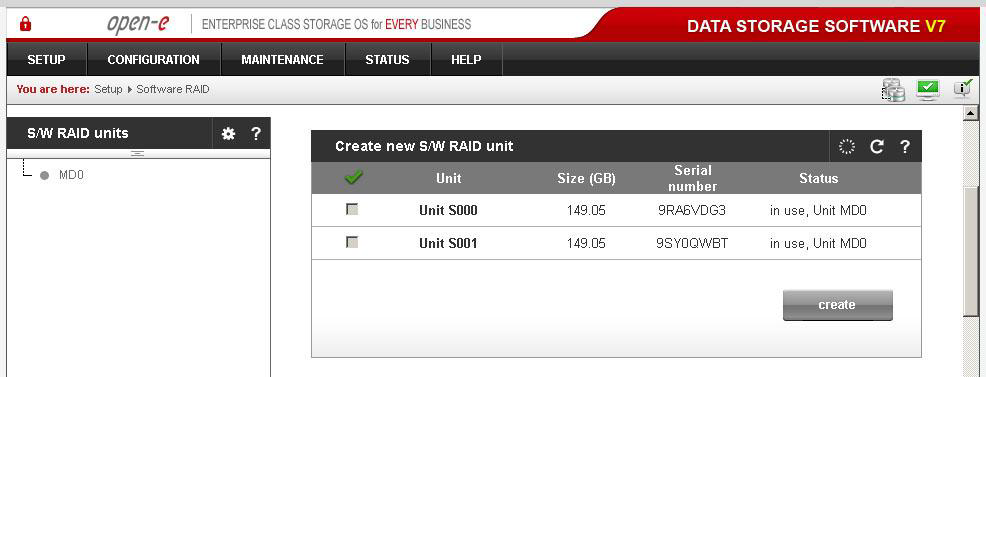

A clear picture about the current state of software raid can be obtained from the file /proc/mdstat The next crucial step is to evaluate and decide how stable these devices are. Now, we have a clear picture about the partition schema and configuration of the server. Let us use the lsblk command to have a somewhat graphical view of the setup in the server.Īs evident from the output, the software raid devices are mounted as given below /dev/md0 I believe explaining the steps involved with the faulty HDD replacement of first scenario can be sorted out from the process flow for the HDD reconfiguration of the second scenario.Ī detailed look into the RAID replace disk process is given below Step 1: RAID Health EvaluationĪ clear picture about the RAID devices and constituent drives along with the partitions is essential to proceed with the operations. So let us start with the second scenario where a healthy drive is requested to be pulled out from a RAID array and get configured as a backup drive. In the second case we can perform this without any downtimes while the server is live. In the first scenario were the drive is dead, down time is inevitable since the DataCenter has to replace the failed drive. When the client needed to remove one drive which was part of the RAID and add it as a backup drive mounted at /backup.īoth the processes are similar with minor changes in the process flow. When one of the disk failed and the failed disk had to be replaced.Ģ. The RAID replace disk have to be done when the following instance occurs,ġ. Software RAID is when the interaction of multiple drives is organized completely by software. RAID Level 1 (mirroring) achieves increased security since even if one drive fails, all the data is still stored on the second drive. There were a couple of instances where I have to break this RAID configuration.
